April 2026 Product Update

It's been a busy few months at Vast.ai, with updates spanning performance and reliability improvements, new templates and guides, and a major step forward for serverless deployment workflows.

NVIDIA Cloud GPU Updates

Managing large numbers of rented instances is now faster and more responsive, thanks to pagination and performance improvements when fetching instance data. Monitoring serverless workloads is easier as well, with improved serverless metric visualization so you can track usage and performance at a glance.

We've also updated the Host Setup page with clearer instructions for new hosts, and extended two-factor authentication via SMS to support additional countries.

Highlighted Feature: Deploy Serverless GPU Endpoints from Python

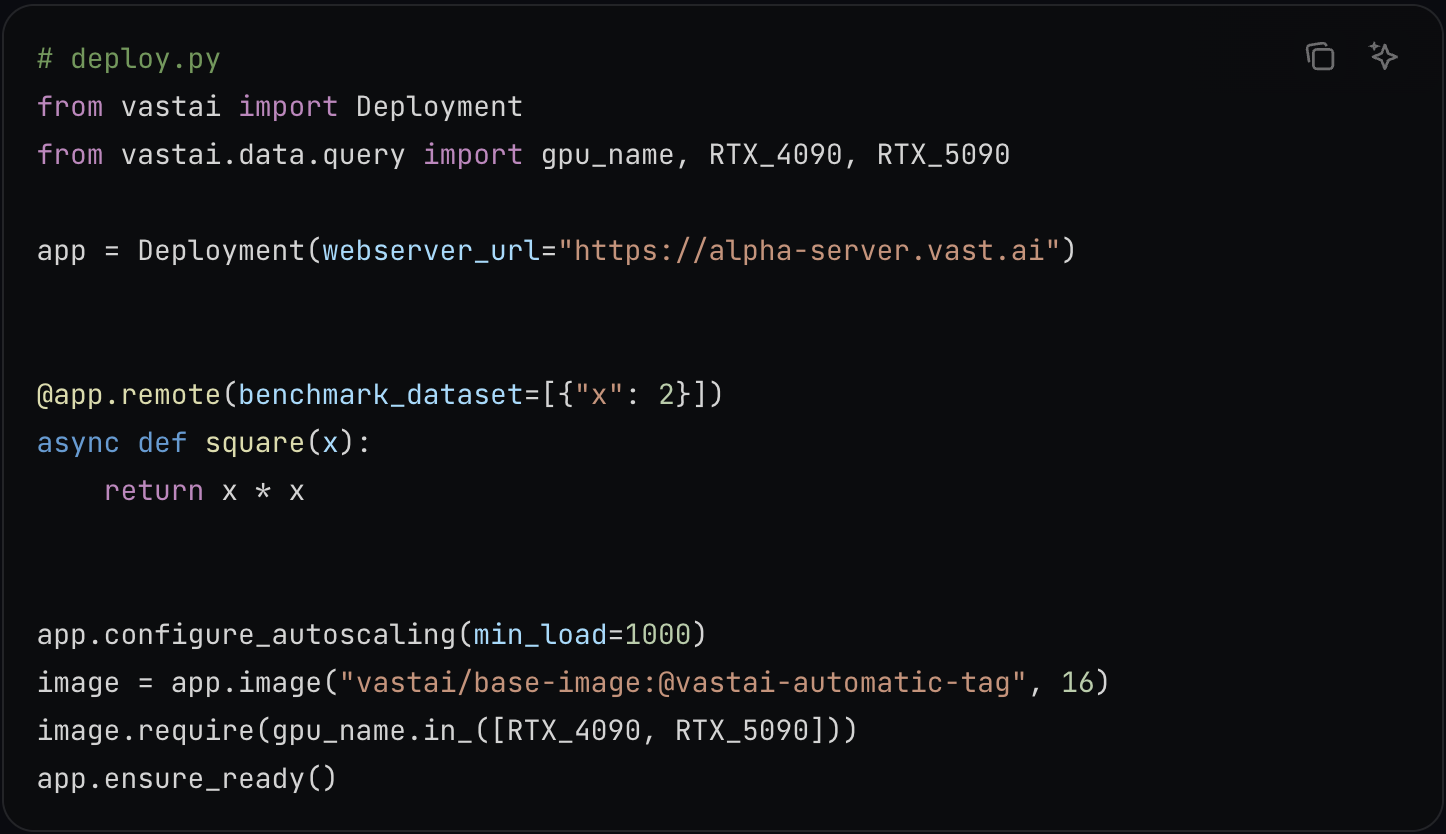

You can now create, configure, and manage serverless GPU endpoints directly from code, without relying on the dashboard.

With the open beta of the Vast SDK deployment workflow, you can define Docker images, apt packages, autoscaling settings, and more entirely in Python. The goal is simple: one install, one deployment flow, and a much faster path from local code to a live serverless endpoint.

Where this gets especially powerful is the @remote programming model. Instead of building HTTP wrappers and managing infrastructure by hand, you can decorate a Python function and call it like local code while Vast handles the serverless execution layer underneath.

This is the fastest way to spin up serverless GPUs at Vast's price-performance point, and it's available now to all users as part of the open beta.

Everything you need is in the deployment docs and Python examples.

New Templates and Guides

We also shipped a fresh round of templates, model pages, and deployment guides to help you get up and running faster across training, inference, multimodal workflows, and migrations.

New Templates

- Unsloth Studio - LLM fine-tuning and inference environment.

- GLM 5 - 744B MoE model for agentic reasoning, coding, and tool use.

- Kimi K2.5 - Next-generation multimodal agentic model.

- vLLM Omni - Multimodal inference engine.

- Voicebox TTS - Multi-model text-to-speech with voice cloning.

- LTX 2.3 via ComfyUI - Video generation workflow.

New Guides

- Budget-Friendly Alternative to Claude Code - Overnight Ralph Loop

- OpenClaw AI Assistant with vLLM on Vast.ai

- Autonomous AI Research with Autoresearch on Vast.ai

- Migrate from Runpod to Vast.ai

Other Improvements

This update also brings a number of platform-level changes, including BitPay support for crypto payments, serverless OpenAI-compatible endpoints, pagination for large rented-instance result sets, and a long list of quality-of-life and reliability fixes.

Our Commitment

As we continue to improve platform performance and expand support for deployment workflows, our focus remains on making AI infrastructure easier to use at scale.

Need assistance? Reach out anytime at support@vast.ai or join our Discord server for tips, discussion, and the latest updates as they happen.

Change Log

New Features

- BitPay payment gateway for crypto payments, including Lightning Network support, flat fees, and refunds across hundreds of wallets including Coinbase.

- Serverless OpenAI API-compatible endpoints released.

- Serverless metric visualization improvements.

- 2FA SMS support expanded to many additional countries.

- Pagination and performance improvements when fetching large numbers of rented instances.

- Host Setup page updated with improved instructions for new hosts.
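With an OpenAI API-compatible endpoint, existing clients can send the standard chat-completions request shape. The standard-library sketch below builds that request but does not send it. The endpoint URL, model name, and Bearer-token header are placeholder assumptions, not documented Vast.ai values.

```python
import json
import urllib.request

# Placeholder URL (assumption): substitute the address shown for your endpoint.
ENDPOINT = "https://example.invalid/v1/chat/completions"


def build_chat_request(model: str, prompt: str, api_key: str) -> urllib.request.Request:
    """Build an OpenAI-style chat-completions POST request without sending it."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        ENDPOINT,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",  # assumed auth scheme
        },
        method="POST",
    )


req = build_chat_request("my-model", "Hello!", "YOUR_API_KEY")
print(req.get_method(), req.full_url)
```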

Issues Resolved

- Local volume deletion issue resolved.

- Copy error visibility improved.

- Copy progress bar issue resolved.

- Disk quota issues resolved.

- Issue with machines missing geolocation data resolved.

- Open button token persistence after instance recreation improved.

- Missing DLPerf on the instance page resolved.

- Various security improvements.

- Various quality-of-life improvements.

- Various serverless edge-case scaling improvements.

- Host CLI schedule maintenance command issue resolved.

Feature Changes

- Coinbase is sunsetting Coinbase Commerce on March 31, so crypto payment support is moving from Coinbase Commerce to BitPay.

API Changes

- Upcoming breaking change: `get instances` will soon return paginated results, with responses limited to a maximum of 25 instances per request. Update API, CLI, and SDK integrations to handle pagination.

- Deprecated: `disable_bundling` for `search offers` will be removed from the API, CLI, and SDK.

- Serverless SDK updated for enhanced security.

Updated Templates

- PyTorch

- Open WebUI

- Ollama

- Ostris Toolkit

- ComfyUI

- Llama.cpp (SSL)

- SGLang (onstart script fixed)

- Pinokio (v6.x)

New GPUs

- RTX PRO 5000

New Blog Posts

- Affordable Claude Code Alternative: Run Autonomous Coding Agents on Vast.ai

- Vast.ai Named Among Fastest Growing Vendors by Ramp and Brex

- Liquid AI's LFM2 Just Dropped - Here's How to Run It on Vast.ai

- Running Qwen 3.5 Medium Models on Vast.ai

- Vast.ai GPUs Can Now Be Rented Through SkyPilot

- 7 Popular Model Templates for AI Workloads on Vast.ai Right Now

- Running Nemotron-Cascade-2 on Vast.ai